Mass Global Mix-up

“Mass public shootings are rising faster around the world than in the United States!”

“Mass public shootings are rising faster in the United States than the rest of the world!”

Stuff like this makes me dislike Twitter even more than I normally do. That I saw these contradictory statements, each citing “real data,” within 60 seconds of one another was my first clue that the pro- and anti-gun camps were shouting past one another and that somebody was not paying attention.

Why the division? It comes down to definitions and data sources.

The Data Disagreement

Two academics – John Lott and Adam Lankford – have published papers that calculate the number of mass public shootings (MPSs) around the globe. These papers come to different conclusions, and the dispute has led one of the two to abandon the congenial language normally bandied by studied men and women.

Geek smarminess aside, we see that these gents presented:

- Different papers

- Taking different approaches

- Using different datasets and

- Using different definitions of MPS

By doing so, they provided ideologues everything they need to keep the public confused about guns and violence. That’s why the Gun Facts project exists – to eradicate confusion.

In This Corner …

John Lott at the Crime Prevention Research Center published his paper 1, which basically concluded that MPSs were more frequent outside the US and that their per capita rate was rising faster outside the US [ED: We’ll note that in an earlier paper 2 he concluded the same; but the Gun Facts project noted that, on a per capita basis, American MPSs were producing more deaths per instance over time. In other words, American mass murderers were getting better at their craft].

In 2016, Adam Lankford published his paper 3 and later produced an updated version [2019, though we could not obtain that document]. He concluded that private gun ownership was a primary driver in MPSs and that the US was the most common place for MPSs.

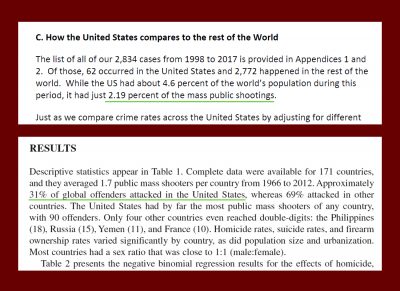

How far off were they? Lott’s data had the US with about 2.2% of the planet’s MPSs and Lankford said 31%. In other words, Lankford’s figure for the US share was about 14 times Lott’s.
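For anyone who wants to check the arithmetic, here is a minimal sketch of the comparison. It uses only the two shares quoted above; the variable names are ours, chosen for illustration.

```python
# US share of the world's mass public shootings, in percent,
# as quoted in the text above. Variable names are illustrative.
lott_us_share = 2.2
lankford_us_share = 31.0

# How many times larger is Lankford's figure than Lott's?
ratio = lankford_us_share / lott_us_share

print(f"Lankford's US share is about {ratio:.0f} times Lott's")
```

The ratio compares the two estimates of the US share, not per capita rates.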

That’s a big gulf. Here’s why:

What You Count and How You Count It

These studies are very different, with one key difference that… well… makes all the difference.

| | Lott | Lankford |
| --- | --- | --- |
| USA % of Global Mass Shootings | 2% | 31% |
| Perpetrators | Any | One |
| Span | 1998-2015 | 1966-2012 |
| Sources | University of Maryland Global Terrorism Database; Nexus Web | New York City Police Department’s 2012 Active Shooter report; FBI’s 2014 active shooter report |
| Languages | 6 | 1 |
| Total MPSs reviewed | 2,834 | 292 |
| Locale | Public Places | Hybrid |
| Gang/drug/crime nexus | Excludes | Excludes Some |
| Scope | 4+ dead, single location | 4+ dead, single or multiple locations |
| Terrorism Related | Includes | Excludes |

The table above briefly lists the notable differences between the two. There is much to discuss, but let’s start with the pachyderm in the parlor.

The data sources are about as different as one could imagine. Lott used data sources with global reach and a study period that included mass global adoption of the internet by the media (MSNBC was launched in 1996). Contrariwise, Lankford used two files from strictly American sources, neither of which was tasked specifically with tabulating global shootings. The NYPD report relied on English-only websites. To quote the NYPD report, “The NYPD chose to restrict quantitative analysis to cases that took place within the United States because the NYPD limited its internet searches to English-language sites, creating a strong sampling bias against international incidents.” [emphasis ours]

The FBI report uses American data only. They didn’t bother to look outside the borders.

This alone makes the Lankford study quite useless for making multinational comparisons. But believe it or not, the matter gets even worse.

Both of Lankford’s data sources are English-only. Pulling from websites that are in English (as the NYPD report did) reaches material written for, at best, 7% of the world’s population. There is some multinational leakage in parts of the world where English is the most common second language (e.g., India), but it is still quite limited. Lott, on the other hand, hired speakers of six different languages to search non-English websites. By including Chinese (1.4 billion people) and Spanish (nearly all of Latin America), the scope was greatly expanded. Had Lott included Hindi, his coverage would have been even better than the 35% of the global population his language choices did cover.

Another big variable was locale. Lankford relied on “active shooter” datasets. Active shooters include people who kill at multiple locations. A madman who shoots his neighbor, then crosses town and shoots his boss, then drops by his in-laws’ and kills both of them is an active shooter with four dead. However, the common definition of “mass public shooting,” and the one Lott uses, is an event at a single location. Note that both of Lankford’s data sources were “active shooter” reports and not “mass public shooting” databases.

There is also the issue of how an MPS is defined. The common and enduring definition of an MPS 4 does not discriminate on the number of perpetrators. In all common MPS databases, there are instances of MPS where there was more than a single attacker (e.g., Columbine, Jonesboro, San Bernardino, Orinda, Kansas City). Lankford appears to restrict his analysis to single shooters, thus creating a new and uncommon definition of MPS and an apples to pineapples comparison with just about every other MPS research effort.

Lastly, and a bit of a picked nit, is that the NYPD active shooter report upon which Lankford based his study goes back to 1966, long before the internet existed, much less had been commercialized. To rely on web searches for ancient data is much more hit-and-miss than doing so for recent news reports. Lott confined his research to post-Internet periods and thus had a more pristine dataset.

And The Winner Is …

Both pro- and anti-gun factions have grabbed one or the other report to justify their biases. At the Gun Facts project, the only side we take is accuracy’s.

To be blunt, the Lankford study leaves nearly everything to be desired. The data sources are insanely restricted and woefully incomplete. In a perfect world, a researcher could hire speakers of every language to search for every known MPS, but Lott’s study did cover 35% of global language use and thus his dataset ain’t shabby.

Research accuracy is based on two primary elements: data quality and methodology. The data quality in Lott’s research is clearly superior. Likewise, Lott’s methodology for data identification and collection is far [far, far, far] better. Though neither report suffers from computational inadequacies, with Lankford’s we face the old computer programmers’ lament of “garbage in, garbage out” – namely, if the data quality stinks, so do the conclusions.

Notes:

1. Comparing the Global Rate of Mass Public Shootings to the U.S.’s Rate and Comparing their Change Over Time; Lott; Crime Prevention Research Center; 2020

2. How a Botched Study Fooled the World About the U.S. Share of Mass Public Shootings — U.S. Rate is Lower than Global Average; Lott; Crime Prevention Research Center; 2018

3. Public Mass Shooters and Firearms: A Cross-National Study of 171 Countries; Lankford; Violence and Victims, Volume 31, Number 2; 2016

4. Multiple Homicide: Patterns of Serial and Mass Murder; Fox, Levin; Crime and Justice, Vol. 23; 1998

So, what you’re saying about English speaking locals, wouldn’t include Kalifornia. OK, I’ll go sit in my corner!

Bill

If nothing else, the presence of an armed citizen (armed pursuant to standards of Colonel Cooper) at each MPS would favorably mitigate the outcome.

Thank you for the analysis on these two studies. Great article.