Kids, Carrying and Con Jobs

The media was lightly abuzz, echoing without critical review a study 1 that said states with strict gun control laws had fewer kids carrying guns.

As with all studies the media fails to study, there is far less here than meets the eye. The report itself has too many lapses to even consider it worthwhile, except perhaps as a case study in poor research methodology.

The major defects in this study include:

- Raw data source and its collection are suspect.

- Major omissions of U.S. states and territories.

- Selection of specific years that ignore trends in juvenile gun misuse.

Raw Data, Unintentionally Cooked

Let’s set aside the fact that the authors of the study currently under my microscope used the Brady Campaign Scorecard as their definition of good/strict gun control laws. We have repeatedly shown that Brady scores have no correlation to violent crime.

The real problem is less with the Brady Scorecard and more with how the rate of juveniles “carrying” guns was measured.

This study turned to the Youth Risk Behavior Survey, a product of the Centers for Disease Control and Prevention (CDC). This ongoing survey attempts to identify risky behaviors in young people (which, if my childhood was any indication, includes just about everything I did). The research involves giving a questionnaire to school students. As a way of knowing whether kids are carrying guns in an endangering way, this methodology has several defects:

ATTENDANCE: School attendance varies greatly by urbanization and economics. In other words, truancy and drop-out rates are much higher in cities with inner-city street gangs. Many of those cities happen to be in states with stricter gun control laws. Hence, the kids most likely to carry guns illegally are the ones least likely to be in class to fill out the survey, which builds a measurement problem into the data.

QUESTION: Does the question “on how many days did you carry a weapon such as a gun” have a different meaning to a gang-banging juvenile and a rural kid who frequently goes hunting? Yes, it does. So the ambiguous question creates problems all on its own.

TRUTHINESS: Do students answer surveys honestly, especially school-administered questions concerning illegal activities? Someone with a pistol in their pocket might answer a question about carrying firearms quite differently than a 16-year-old from Arkansas who just bagged his first whitetail deer.

Since the study under scrutiny treats this survey as a gold standard, and uses no other basis for measuring how often juveniles carry guns illegally or recklessly, the study rapidly becomes farcical. Indeed, one of the biggest gripes about this study is that the authors studiously avoided crime statistics, such as the number of arrests or convictions of juveniles for illegal firearm carry or use (more on this below). Arrests for weapons violations are a very direct and statistically robust measure of endangering behavior (unless you wish to count shooting bucks during hunting season as endangering, which to the buck I suppose it is). This is what the authors should have used, which is likely why they didn’t.

MIA States and Districts

Even if the raw data collected from the Youth Risk Behavior Survey were sound for this purpose, it is glaring that only 38 states are reported in the cherry-picked years this study used. Among the missing is California, which includes LA County, home of the original Gangster Paradise and a wellspring of wholesale homicide with guns, including homicide by juvenile gang members.

This is important because California ranks as #1 for strict gun control laws.

Ponder this a bit more. The state with the strictest gun control laws, which includes Los Angeles, a city in the top 20 U.S. cities for homicides, is not represented in this study. Neither is Washington, D.C., which retains the title of the nation’s homicide capital. That the Youth Risk Behavior Survey doesn’t capture this data is not the fault of the authors of the study I dissect. But choosing to rely on a data set with these holes is their fault, and a glaring (perhaps intentional) one.

Declining Juvenile Gun Crime

What the study does not measure is whether this is a growing problem (not that this study could, given the reprehensible methodology). The good news is that juvenile weapons crime is a shrinking problem.

Recall that I suggested using crime statistics instead of survey data. The Bureau of Justice Statistics has a lot of gooey numbers concerning crimes and guns. As expected, they even note what the trends are concerning weapons and juveniles, and show that the rate of firearm law violations (such as carrying in public) is on the decline 2. In fact, in the last six years of available data, the rate of weapons offenses for juveniles has fallen almost 50%. Things are getting better on a national basis … except in California (the reduction was only 41%). D.C. doesn’t report those numbers at all.
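For readers who want to check this kind of trend themselves, here is a minimal sketch in Python of the arithmetic involved: turn arrest counts into a rate per 100,000 juveniles, then compute the percent change between two years. The arrest counts and population below are made-up placeholders, not Bureau of Justice Statistics figures, and the function names are my own invention.

```python
def rate_per_100k(arrests, population):
    """Arrests per 100,000 people in the given population."""
    return arrests * 100_000 / population


def pct_change(old_rate, new_rate):
    """Percent change from old_rate to new_rate (negative means a decline)."""
    return (new_rate - old_rate) * 100 / old_rate


# HYPOTHETICAL placeholder numbers, for illustration only.
juvenile_population = 2_500_000
arrests_first_year = 5_000
arrests_last_year = 2_550

first = rate_per_100k(arrests_first_year, juvenile_population)
last = rate_per_100k(arrests_last_year, juvenile_population)

print(f"first year: {first:.1f} per 100k")               # 200.0
print(f"last year:  {last:.1f} per 100k")                # 102.0
print(f"change:     {pct_change(first, last):.1f}%")     # -49.0
```

Working in rates rather than raw counts matters because states differ enormously in population, so a raw arrest count says little when comparing one state against another or against the nation.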

Interestingly, the authors of this rapidly fading “study” selected survey years 2007, 2009 and 2011. Yet the survey was also conducted in 2013. This is important because over time, more and more states have participated in this somewhat crippled survey. Had the authors used the last three years, their data would have been much more robust though no less inaccurate.

So let’s be clear: The goal is to measure endangerment. Actual, real, occurring firearm misuse. This data is collected daily by law enforcement across the country and has been for decades. It is available to anyone. Yet the authors sought data that inaccurately and indirectly measured nothing of the sort. Speaking of crime, I think we saw one committed in this study.

What Does Crime Tell Us

Had the authors used juvenile weapons crime data instead of inaccurate, limited and indirect survey methods, they would likely have come to a different conclusion.

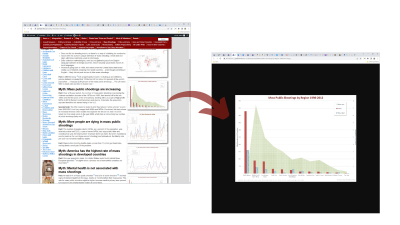

Funding limits do not permit a full analysis at this time (send Gun Facts some money and we’ll add it to the project list). So we quickly grabbed the FBI’s juvenile arrest rates for weapons charges for the states with the strictest and least strict Brady Campaign scores, and for the United States as a whole. We looked at annual data covering 12 years, up to the most recent reporting year the FBI’s online database presented.

California, with the strictest gun control laws in the land, has a substantially higher juvenile arrest rate for weapons offenses. By substantially higher, I mean nearly 70% higher than the United States as a whole and nearly 400% higher than Arizona, the state with allegedly the most lax gun control in the nation. Instantly we see not only the original sin the media missed (i.e., omitting big problem states like California) but also that the basic hypothesis of the study appears to be inaccurate when exposed to the harsh light of hard data.
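Since "percent higher" comparisons are easy to misread, here is a short Python sketch of how such a figure is computed. The three rates are hypothetical placeholders chosen only to reproduce round percentages. They are not the FBI numbers behind the chart, and `pct_higher` is a helper name I made up.

```python
def pct_higher(rate, baseline):
    """How much higher `rate` is than `baseline`, as a percentage of baseline."""
    return (rate - baseline) * 100 / baseline


# HYPOTHETICAL arrest rates per 100,000 juveniles, for illustration only.
strictest_state = 170.0
national = 100.0
least_strict_state = 34.0

print(pct_higher(strictest_state, national))            # 70.0
print(pct_higher(strictest_state, least_strict_state))  # 400.0
print(strictest_state / least_strict_state)             # 5.0
```

Note the last line: a rate that is 400% higher than another is five times as large, not four times.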

A quick aside about this chart: Please take note that after years of national decline in such offenses, California has yet to have a juvenile weapons arrest rate as low as Arizona did a decade and a half ago.

Another to Ignore

This is yet another study worth ignoring because it was defective from birth, crippled by congenital defects in the source data, in the assumptions about strict gun control laws, and even in the nature of what was being measured. I just wish the media were wise enough to ignore bad research.
