Gun Ownership RANDomness

The Rand Corporation does a lot of interesting stuff. So, when they studied household gun ownership rates, we at the Gun Facts project took note.

And we sadly note that this is not Rand’s finest work. It really isn’t even presentable.

Normally we try not to go too deep into minutiae on the Gun Facts blog, mainly reserving it for more groundbreaking observations (such as when we untangled the “cattle pen scenario” element of mass public shootings or dove deep into what, if any, impact “high capacity magazines” had). But today, we will have to guide you through some perspective on gun ownership rates and show how Rand seems to have muddied the political waters.

Why we need to know state-level gun ownership rates

The lack of perfect knowledge about household gun ownership rates by state has annoyed criminologists and social scientists for decades. Since most American states do not require guns to be registered, knowing gun ownership rates requires a bit of guesswork (and given the massive noncompliance rate for gun registration around the world, odds are that registration across America would be worthless in this pursuit).

But knowing the rate of gun ownership by state is valuable because it would let us measure the effects of gun control laws in the sundry states. There are several trending polls about national ownership rates, but they don't tell us how different Florida is from New York.

Rand, funded by a rather odd philanthropic cabal, decided to take a stab at it. But given the data quality and research methodology weirdness in Rand’s household firearm ownership scheme, it cannot be used. Normally we at the Gun Facts project could kick their findings to the curb. The problem is that one side of the gun control policy dispute jumped on Rand’s findings (misciting them in the process) and will likely echo Rand’s bad conclusions forever.

Thus, we have to nip this in the bud.

The fundamental flaw in the Rand gun ownership study

The oversimplified short story of the Rand study is that they used one survey as a fulcrum to model everything.

For three years, a project by the Centers for Disease Control called the Behavioral Risk Factor Surveillance System (BRFSS) included questions about household gun ownership. The studies were massive – nearly a quarter of a million contacts in the year of data we downloaded from the CDC. Normally, this is a very positive thing – the more responses, the more accurate the results are… typically.

But if the wrong person asks the right question, or the right person asks the wrong question, things quickly get murky.

The problem is that Rand treated the three clustered BRFSS surveys as a good baseline, and the rest of the study then uses other proxies in an attempt to validate it (and we won't go into their statistical model, which has more than a few quirks).

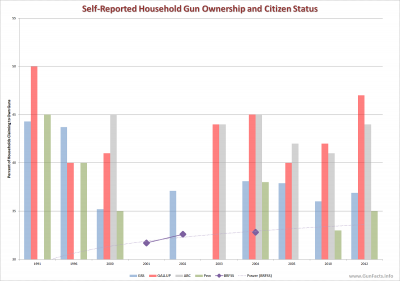

As we noted in an earlier review of gun ownership polls, what constitutes a “household” is a bit flexible. From a criminology standpoint, a household is where one or more people share housing regardless of their citizenship or residency status. In other words, count everybody including legal and illegal residents. From a public policy standpoint, since illegal aliens are not allowed to own guns, they should be excluded from such surveys. That one reason alone accounted for the differences we saw in ABC and Gallup polls showing steady gun ownership rates among citizens, while GSS and Pew reported declining rates of ownership (which included non-citizens).

BRFSS follows the same approach as GSS and Pew and includes every type of household. Thus, it includes an estimated 5,200,000 households where one or more people are in the country illegally and thus prohibited from owning a gun. This defect is important because it breaks any assured alignment between retail-level gun control laws and crime, and thus breaks any comparison between states for the efficacy of their gun control laws (underground guns in the hands of illegal aliens are a whole different matter).

Another problem in using the three clustered BRFSS surveys is that they create an inaccurate fulcrum point for trend observation. ABC and Gallup (steady ownership rates, citizens polled) and Pew and GSS (declining ownership rates, everyone polled) have been conducting their trend surveys for longer than even this chart shows, so they provide a wide basis for trending, whereas BRFSS provides an extremely narrow one.

And then there is the “I’m from the government and I’m here to ask you about possibly illegal activities” problem. The BRFSS questionnaire begins “HELLO, I’m calling for the [state health department] and the Centers for Disease Control and Prevention.” Now if your next question to a head of household with a felony conviction is “Do you smoke cigarettes,” you would likely get an honest answer. If the question is “Is there a gun in your house,” you will get a lie. Now, this defect exists in all surveys to some degree, but when the caller is known to be part of the government, you get a different rate of response than if Gallup phoned.

And, oddly, Rand concluded that household gun ownership rates were going down even though the BRFSS trend on its own was upward.

Amplifying bad

If one begins with a poor baseline and builds an elaborate statistical model using multiple proxies to validate it, the results will still be wrong (GIGO, for all the computer gurus in the Gun Facts fan base). This error is multiplied when proxies are less than desirable.

The first set of proxies involves other surveys. Of the four used (BRFSS, Gallup, GSS and Pew), only one surveyed only citizens (as proxied by registered voter status). Thus, three of the four surveys mapped against one another included people from those five million or so households with one or more illegal aliens. Had Rand used Gallup and ABC as proxy polls, the forced-fit alignment of those to the BRFSS surveys would have been difficult to impossible.

But it gets worse. Magazine subscriptions, suicides, hunting licenses and obeying the law – these were all used as validating proxies, and they all have serious problems. Let’s start with the grim topic of ….

Suicide proxy

Many well respected criminologists have concluded that suicide rates are fair (not precise) proxies for gun ownership rates. But we at the Gun Facts Project have our doubts.

The CDC tells us that firearm suicide rates, as a percentage of all suicides, are marginally higher for men in non-metro areas. But for women, rural firearm suicide rates are significantly higher than for women in the cities.

This is a nagging point about firearm suicide rates as a proxy for gun ownership rates. Most people who see firearm suicides as a good proxy (including some renowned criminologists) have not fully explored this divergence. It matters, though, because at the state level, which is the level Rand works at, the strength of the linkage depends heavily on the state.

Take Virginia as an example. Aside from three major metro areas – Norfolk, Richmond and the DC beltway – Virginia is really rural. Thus, to make a blanket statement about Virginia suicide rates being a fair proxy for gun ownership rates for the entire state is more than a bit silly. But Rand used state-level firearm/non-firearm suicide rates as a validating proxy and without apparent county-level adjustments. They did control for a generic male/female split, but as we see, that applies much more to city dwellers than to people outside of metro regions.

Hunting licenses

Likewise, Rand used hunting licenses as a validating proxy for gun ownership, which has more than a couple of problems.

Contrast the population density map of Virginia above with this density map of New Jersey. Hunting is not exclusive to non-metro folks, but it is well understood that per capita rates of hunting licenses are higher in non-metro areas. Outside of the cities you find more people with hunting licenses and hunting rifles.

But handgun ownership rates might be similar, or even higher, in the cities, and those handguns would not track to hunting licenses.

Rand used hunting licenses as a validating proxy, but as with suicides, this is all rather meaningless at the state level.

Law-abiding regulations

Rand also included the rates of firearm purchase background checks, permits to purchase (in those states that require such) and background checks between private parties (AKA “universal” checks, in those states that require such). There are two fatal flaws in using these laws as a proxy for overall firearm ownership.

NEW, NOT OLD: First, this measures new gun acquisitions, not existing ownership. The two are not linked. According to the BATF’s annual reports, sales of guns have been increasingly brisk for several decades, with a demonstrable uptick in the most recent years. Thus Rand errs in thinking the new purchases are a reflection of existing inventory.

And this error is amplified by region. We know from other criminology literature that gun sales rise locally when the public perception of local violence rises. It is beyond the scope of this blog post, but there have been large-scale shifts in crime rates in various places around the country, with some metro regions falling and others rising. A spike in the level of perceived crime in Nashville would spike the new gun sales there but not in Memphis, and both of those musical cities are in the same state.

LEGAL, ILLEGAL: The laws Rand used as a proxy for gun ownership do not reflect ownership once we include illegal possession and acquisition. Every decade, the Bureau of Justice Statistics tells us that 40% of crime guns come from underground markets. Since background checks and such are only indicators of new gun sales to law-abiding citizens, we have a very imperfect proxy, but one that Rand relied upon.

Periodicals

Perhaps the oddest proxy of gun ownership rates was the use of Guns & Ammo magazine. Before the commercialization of the Internet, this might have been a viable proxy. But as the U.S. Postal Service confirms in this chart, they don't deliver many magazines these days, with the drop-off mirroring the switch to online activity.

Add to the falling rate of Guns & Ammo subscriptions the obvious notion that who buys the magazine changes over time. Here are two covers of Guns & Ammo, chosen randomly, one from the first year of the Rand study and one from the last (and we did notice the number of AR-15 covers in the most recent year). The "typical" Guns & Ammo reader may have changed significantly over time. With women making up a growing share of gun owners, but presumably a smaller share of the ardent hobbyist class that Guns & Ammo normally attracts, odds are this is a very lousy proxy.

The Force is strong in this one

Engineers occasionally joke, “If it doesn’t fit, force it.”

That is kind of what Rand did in their estimate of household gun ownership. In multiple places in their paper, they discuss their mathematical elaborations for obtaining alignment between their poor choice of baseline measurements (the BRFSS surveys) and their proxies.

Here is an example from the Rand gun ownership study. They state, “The mean of the rate of Guns & Ammo subscriptions per 100 residents changes over time in a manner not explained by changes in the [household firearm rate], resulting in an association between the Guns & Ammo variable and time conditioned on the [household firearm rate].” This is analyst-speak for “the magazine subscription numbers don’t jibe with the gun ownership numbers.” They continue, “Specifically, we found that rates for Guns & Ammo subscriptions, background checks, and hunting licenses had trends that differed from rates of household firearm ownership over time.”

Which means their baseline measure is wrong, their proxies are not proxies, or both. None of these three options validates their conclusions that household firearm ownership rates are falling.

To cite or not to cite… it’s not even a question

Why did the Gun Facts project expend the effort to educate the public about the grand flaws in the Rand gun ownership study? Because activist organizations are already using the flawed conclusions to mold public opinion. It is up to you to set the record straight when you see or hear someone aping those talking points.